Installing and Deploying the Linkerd Service Mesh

王先森 2023-04-24

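Introduction to Linkerd

Linkerd is a fully open-source service mesh for Kubernetes. It makes running services easier and safer by giving you runtime debugging, observability, reliability, and security, all without requiring any changes to your code.

Linkerd works by installing a set of ultralight, transparent proxies next to each service instance. These proxies automatically handle all traffic to and from the service. Because they are transparent, they act as highly instrumented out-of-process network stacks, sending telemetry to the control plane and receiving control signals from it. This design lets Linkerd measure and manipulate traffic to and from your services without introducing excessive latency. To be as small, light, and safe as possible, Linkerd's proxies are written in Rust.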

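Feature overview

- Automatic mTLS: Linkerd automatically enables mutual Transport Layer Security (TLS) for all communication between meshed applications.
- Automatic proxy injection: Linkerd automatically injects the data plane proxy into pods based on annotations.
- Container Network Interface plugin: Linkerd can be configured to run a CNI plugin that rewrites each pod's iptables rules automatically.
- Dashboard and Grafana: Linkerd provides a web dashboard as well as pre-configured Grafana dashboards.
- Distributed tracing: You can enable distributed tracing support in Linkerd.
- Fault injection: Linkerd provides mechanisms for programmatically injecting failures into services.
- High availability: The Linkerd control plane can run in high availability (HA) mode.
- HTTP, HTTP/2, and gRPC proxying: Linkerd automatically enables advanced features (including metrics, load balancing, and retries) for HTTP, HTTP/2, and gRPC connections.
- Ingress: Linkerd can work alongside the ingress controller of your choice.
- Load balancing: Linkerd automatically load balances requests across all destination endpoints on HTTP, HTTP/2, and gRPC connections.
- Multi-cluster communication: Linkerd can transparently and securely connect services running in different clusters.
- Retries and timeouts: Linkerd can perform service-specific retries and timeouts.
- Service profiles: Linkerd's service profiles enable per-route metrics as well as retries and timeouts.
- TCP proxying and protocol detection: Linkerd can proxy all TCP traffic, including TLS connections, WebSockets, and HTTP tunneling.
- Telemetry and monitoring: Linkerd automatically collects metrics from all services that send traffic through it.
- Traffic split (canary, blue/green deployments): Linkerd can dynamically send a portion of traffic to different services.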

Architecture

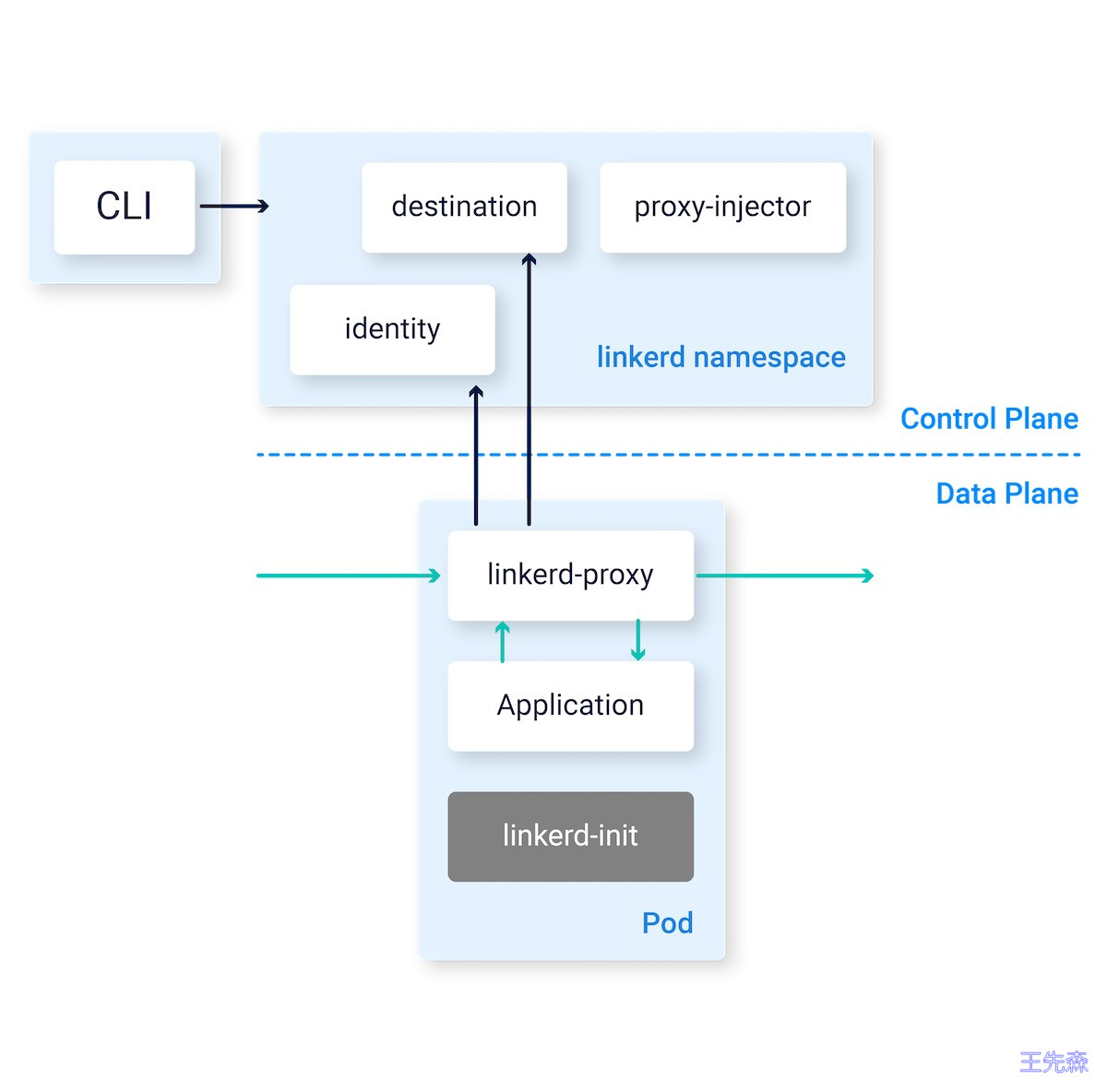

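At a high level, Linkerd consists of a control plane and a data plane.

- The control plane is a set of services that provide control over Linkerd as a whole.
- The data plane consists of transparent micro-proxies that run next to each service instance as sidecar containers in the Pod. These proxies automatically handle all TCP traffic to and from the service and communicate with the control plane for configuration.

Linkerd also provides a CLI tool for interacting with the control plane and the data plane.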

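Control plane

The Linkerd control plane is a set of services that run in a dedicated Kubernetes namespace (linkerd by default). It consists of several components:

- Destination service (destination): Data plane proxies use the destination service to determine various aspects of their behavior. It provides service discovery information, policy information about which types of requests are allowed, and service profile information that drives per-route metrics, retries, and timeouts.
- Identity service (identity): The identity service acts as a TLS certificate authority. It accepts CSRs from proxies and returns signed certificates. These certificates are issued when a proxy initializes and are used for proxy-to-proxy connections to implement mTLS.
- Proxy injector (proxy injector): The proxy injector is a Kubernetes admission controller that receives a webhook request every time a pod is created. It inspects the resource for the Linkerd-specific annotation (linkerd.io/inject: enabled). When that annotation is present, the injector mutates the pod specification and adds the proxy-init and linkerd-proxy containers, along with the relevant startup configuration, to the pod.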
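To make the injection annotation concrete, here is a minimal sketch of a Deployment whose pod template opts in to the mesh. The name demo-app, the default namespace, and the nginx image are placeholders chosen for illustration, not something this article prescribes; the manifest is only applied when kubectl can reach a cluster.

```shell
# Write a hypothetical Deployment whose pod template carries the injection annotation.
cat > demo-injected.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-app              # hypothetical name
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: demo-app
  template:
    metadata:
      labels:
        app: demo-app
      annotations:
        linkerd.io/inject: enabled   # the proxy injector looks for this annotation
    spec:
      containers:
        - name: app
          image: nginx:1.25          # placeholder image
          ports:
            - containerPort: 80
EOF

# Apply only when kubectl exists and a cluster is reachable; otherwise just keep the file.
if command -v kubectl >/dev/null 2>&1 && kubectl cluster-info >/dev/null 2>&1; then
  kubectl apply -f demo-injected.yaml
else
  echo "kubectl or cluster not available; manifest written to demo-injected.yaml"
fi
```

The annotation sits on the pod template rather than on the Deployment itself, because the webhook fires on pod creation.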

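Data plane

The Linkerd data plane consists of ultralight micro-proxies deployed as sidecar containers inside application Pods. Thanks to the iptables rules set up by linkerd-init (or, alternatively, by Linkerd's CNI plugin), these proxies transparently intercept TCP connections to and from each pod.

- Proxy (Linkerd2-proxy): Linkerd2-proxy is an ultralight, transparent micro-proxy written in Rust. It is designed specifically for the service mesh use case and is not intended as a general-purpose proxy. Its features include:
  - Transparent, zero-config proxying for HTTP, HTTP/2, and arbitrary TCP protocols.
  - Automatic Prometheus metrics export for HTTP and TCP traffic.
  - Transparent, zero-config WebSocket proxying.
  - Automatic, latency-aware, layer 7 load balancing.
  - Automatic layer 4 load balancing for non-HTTP traffic.
  - Automatic TLS.
  - An on-demand diagnostic Tap API.
  - Service discovery via DNS and the destination gRPC API.
- Linkerd init container: The linkerd-init container is added to each meshed Pod as a Kubernetes init container, which runs before any other container starts. It uses iptables to route all TCP traffic to and from the Pod through the proxy.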

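Installing and deploying Linkerd

Installing with the Linkerd CLI

We install the Linkerd CLI locally and then use it to install the Linkerd control plane onto a Kubernetes cluster.

First, run kubectl locally to confirm that you can reach a working Kubernetes cluster. If you do not have one, KinD can quickly create a cluster on your machine.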

kubectl version --short
Client Version: v1.23.6
Server Version: v1.23.6

Install the Linkerd CLI locally with the following command:

$ curl --proto '=https' --tlsv1.2 -sSfL https://run.linkerd.io/install | sh

On macOS, you can also install it in one step with Homebrew:

brew install linkerd

Alternatively, download the binary directly from the Linkerd releases page: https://github.com/linkerd/linkerd2/releases/
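When the CLI comes from the install script, the binary usually ends up under $HOME/.linkerd2/bin and has to be put on PATH by hand. The sketch below assumes that location (check what the installer prints on your machine) and adds it only if it is not already there.

```shell
# Assumption: the install script placed the binary under $HOME/.linkerd2/bin.
LINKERD_BIN="$HOME/.linkerd2/bin"

# Prepend the directory to PATH unless it is already present.
case ":$PATH:" in
  *":$LINKERD_BIN:"*) ;;                      # already on PATH, nothing to do
  *) export PATH="$LINKERD_BIN:$PATH" ;;
esac

# Print the client version if the binary can be found.
if command -v linkerd >/dev/null 2>&1; then
  linkerd version --client
else
  echo "linkerd not found in $LINKERD_BIN; check the installer output"
fi
```

To make the change permanent, the export line can go into your shell profile.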

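After installation, verify that the CLI works with the following command:

linkerd version
Client version: stable-2.13.1
Server version: unavailable

The CLI version is shown as expected, but the output also contains Server version: unavailable. That is because the control plane has not been installed on the Kubernetes cluster yet, so the next step is to install the server side.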

Kubernetes clusters can be configured in many different ways. Before installing the Linkerd control plane, check that everything is set up correctly. To verify that the cluster is ready for Linkerd, run:

$ linkerd check --pre
kubernetes-api
--------------
√ can initialize the client
√ can query the Kubernetes API

kubernetes-version
√ is running the minimum Kubernetes API version

pre-kubernetes-setup
√ control plane namespace does not already exist
√ can create non-namespaced resources
√ can create ServiceAccounts
√ can create Services
√ can create Deployments
√ can create CronJobs
√ can create ConfigMaps
√ can create Secrets
√ can read Secrets
√ can read extension-apiserver-authentication configmap
√ no clock skew detected

linkerd-version
√ can determine the latest version
√ cli is up-to-date

Status check results are √

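If every check passes, you can install the Linkerd control plane with the following commands:

# Newer versions require installing the CRDs before installing Linkerd
linkerd install --crds | kubectl apply -f -
linkerd install | kubectl apply -f -

Here, linkerd install generates a Kubernetes manifest containing all the required control plane resources, and kubectl apply installs it into the cluster.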
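Because linkerd install only renders a manifest, it can be useful to save the output to a file and review it before applying. The function below is a minimal sketch of that workflow; the name install_linkerd and the two file names are made up for this example. The CRDs are applied before the control plane is rendered, following the order used above.

```shell
# Hypothetical helper: render each manifest to a file, apply it, then verify.
install_linkerd() {
  linkerd install --crds > linkerd-crds.yaml &&       # render the CRDs
  kubectl apply -f linkerd-crds.yaml &&               # CRDs must exist first
  linkerd install > linkerd-control-plane.yaml &&     # render the control plane
  kubectl apply -f linkerd-control-plane.yaml &&
  linkerd check                                       # wait for readiness and validate
}

# Usage (requires the linkerd CLI, kubectl, and a reachable cluster):
#   install_linkerd
```

Keeping the rendered files also gives you something to diff against when upgrading later.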

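As you can see, the Linkerd control plane is installed into a namespace named linkerd. Once the installation finishes, the following Pods are running:

$ kubectl get pods -n linkerd
NAME                                      READY   STATUS    RESTARTS   AGE
linkerd-destination-7fcf6649dd-h2ztv      4/4     Running   0          92s
linkerd-identity-68d57d5-5trt8            2/2     Running   0          93s
linkerd-proxy-injector-759c487549-lqvjm   2/2     Running   0          92s

After installation, run the following command to wait for the control plane to become ready and to verify the result: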

$ linkerd check
kubernetes-api
√ can initialize the client
√ can query the Kubernetes API

kubernetes-version
√ is running the minimum Kubernetes API version

linkerd-existence
√ 'linkerd-config' config map exists
√ heartbeat ServiceAccount exist
√ control plane replica sets are ready
√ no unschedulable pods
√ control plane pods are ready
√ cluster networks contains all pods
√ cluster networks contains all services

linkerd-config
√ control plane Namespace exists
√ control plane ClusterRoles exist
√ control plane ClusterRoleBindings exist
√ control plane ServiceAccounts exist
√ control plane CustomResourceDefinitions exist
√ control plane MutatingWebhookConfigurations exist
√ control plane ValidatingWebhookConfigurations exist
√ proxy-init container runs as root user if docker container runtime is used

linkerd-identity
√ certificate config is valid
√ trust anchors are using supported crypto algorithm
√ trust anchors are within their validity period
√ trust anchors are valid for at least 60 days
√ issuer cert is using supported crypto algorithm
√ issuer cert is within its validity period
√ issuer cert is valid for at least 60 days
√ issuer cert is issued by the trust anchor

linkerd-webhooks-and-apisvc-tls
√ proxy-injector webhook has valid cert
√ proxy-injector cert is valid for at least 60 days
√ sp-validator webhook has valid cert
√ sp-validator cert is valid for at least 60 days
√ policy-validator webhook has valid cert
√ policy-validator cert is valid for at least 60 days

linkerd-version
√ can determine the latest version
√ cli is up-to-date

control-plane-version
√ can retrieve the control plane version
√ control plane is up-to-date
√ control plane and cli versions match

linkerd-control-plane-proxy
√ control plane proxies are healthy
√ control plane proxies are up-to-date
√ control plane proxies and cli versions match

Status check results are √

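When the Status check results are √ message above appears, the Linkerd control plane has been installed successfully.

Installing with Helm

Besides the CLI, the control plane can also be installed from a Helm chart, as shown below: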

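$ helm repo add linkerd https://helm.linkerd.io/stable
# Set the expiry date one year from now (GNU date)
exp=$(date -d '+8760 hour' +"%Y-%m-%dT%H:%M:%SZ")
# On macOS (BSD date), use this instead:
# exp=$(date -v+8760H +"%Y-%m-%dT%H:%M:%SZ")
helm install linkerd2 \
  --set-file identityTrustAnchorsPEM=ca.crt \
  --set-file identity.issuer.tls.crtPEM=issuer.crt \
  --set-file identity.issuer.tls.keyPEM=issuer.key \
  --set identity.issuer.crtExpiry=$exp \
  linkerd/linkerd2

Note that linkerd/linkerd2 is the legacy chart name. From Linkerd 2.12 onward the chart is split into linkerd-crds and linkerd-control-plane, so adjust the chart names to match the release you are installing.

The chart also ships a values-ha.yaml file that overrides some defaults for high-availability setups, similar to the --ha option of linkerd install. You can obtain values-ha.yaml by fetching the chart files.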
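The Helm command expects ca.crt, issuer.crt, and issuer.key to exist already, but the article does not show how they were created. The sketch below is one possible way to produce them with openssl, using ECDSA P-256 keys; the file names match the Helm flags, while the .cnf file names, the validity periods, and the choice of openssl itself are assumptions of this example.

```shell
# Trust anchor (root CA): ECDSA P-256 key plus a self-signed certificate.
openssl ecparam -name prime256v1 -genkey -noout -out ca.key
cat > ca.cnf <<'EOF'
[req]
distinguished_name = dn
x509_extensions = v3_ca
prompt = no
[dn]
CN = root.linkerd.cluster.local
[v3_ca]
basicConstraints = critical,CA:TRUE
keyUsage = critical,keyCertSign,cRLSign
subjectKeyIdentifier = hash
EOF
openssl req -x509 -new -key ca.key -sha256 -days 3650 -config ca.cnf -out ca.crt

# Issuer (intermediate CA) used by the identity service, signed by the trust anchor.
openssl ecparam -name prime256v1 -genkey -noout -out issuer.key
cat > issuer.cnf <<'EOF'
[req]
distinguished_name = dn
prompt = no
[dn]
CN = identity.linkerd.cluster.local
[v3_issuer]
basicConstraints = critical,CA:TRUE,pathlen:0
keyUsage = critical,keyCertSign,cRLSign
subjectKeyIdentifier = hash
authorityKeyIdentifier = keyid
EOF
openssl req -new -key issuer.key -config issuer.cnf -out issuer.csr
openssl x509 -req -in issuer.csr -CA ca.crt -CAkey ca.key -CAcreateserial \
  -sha256 -days 365 -extfile issuer.cnf -extensions v3_issuer -out issuer.crt

# Confirm that the issuer certificate chains to the trust anchor.
openssl verify -CAfile ca.crt issuer.crt
```

Keep ca.key somewhere safe and out of the cluster; only ca.crt, issuer.crt, and issuer.key are passed to Helm.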

Either installation method works. Linkerd is now installed, and running linkerd version again shows the server version:

$ linkerd version
Client version: stable-2.13.1
Server version: stable-2.13.1

Installing the Linkerd Viz extension

One thing to note about the viz extension: recent releases no longer bundle Grafana. When installing viz, point it at an external Grafana with --set grafana.url (for an address outside the cluster, use the grafana.externalUrl parameter instead). Use dashboard.enforcedHostRegexp to specify which host names and IPs may access the dashboard.

**Official parameter reference**: https://github.com/linkerd/linkerd2/blob/main/viz/charts/linkerd-viz/README.md

linkerd viz install --set grafana.externalUrl=http://grafana.od.com,dashboard.enforcedHostRegexp='^(localhost|127\.0\.0\.1|web\.linkerd-viz\.svc\.cluster\.local|web\.linkerd-viz\.svc|viz\.od\.com|\[::1\])(:\d+)?' | kubectl apply -f -

After installation, run the following command to wait for the Viz extension to become ready and to verify the result:

linkerd check

kubernetes-api
√ can initialize the client
√ can query the Kubernetes API

kubernetes-version
√ is running the minimum Kubernetes API version

linkerd-existence
√ 'linkerd-config' config map exists
√ heartbeat ServiceAccount exist
√ control plane replica sets are ready
√ no unschedulable pods
√ control plane pods are ready
√ cluster networks contains all pods
√ cluster networks contains all services

linkerd-config
√ control plane Namespace exists
√ control plane ClusterRoles exist
√ control plane ClusterRoleBindings exist
√ control plane ServiceAccounts exist
√ control plane CustomResourceDefinitions exist
√ control plane MutatingWebhookConfigurations exist
√ control plane ValidatingWebhookConfigurations exist
√ proxy-init container runs as root user if docker container runtime is used

linkerd-identity
√ certificate config is valid
√ trust anchors are using supported crypto algorithm
√ trust anchors are within their validity period
√ trust anchors are valid for at least 60 days
√ issuer cert is using supported crypto algorithm
√ issuer cert is within its validity period
√ issuer cert is valid for at least 60 days
√ issuer cert is issued by the trust anchor

linkerd-webhooks-and-apisvc-tls
√ proxy-injector webhook has valid cert
√ proxy-injector cert is valid for at least 60 days
√ sp-validator webhook has valid cert
√ sp-validator cert is valid for at least 60 days
√ policy-validator webhook has valid cert
√ policy-validator cert is valid for at least 60 days

linkerd-version
√ can determine the latest version
√ cli is up-to-date

control-plane-version
√ can retrieve the control plane version
√ control plane is up-to-date
√ control plane and cli versions match

linkerd-control-plane-proxy
√ control plane proxies are healthy
√ control plane proxies are up-to-date
√ control plane proxies and cli versions match

linkerd-viz
√ linkerd-viz Namespace exists
√ can initialize the client
√ linkerd-viz ClusterRoles exist
√ linkerd-viz ClusterRoleBindings exist
√ tap API server has valid cert
√ tap API server cert is valid for at least 60 days
√ tap API service is running
√ linkerd-viz pods are injected
√ viz extension pods are running
√ viz extension proxies are healthy
√ viz extension proxies are up-to-date
√ viz extension proxies and cli versions match
√ prometheus is installed and configured correctly
√ viz extension self-check

Status check results are √
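The dashboard.enforcedHostRegexp value decides which Host headers the dashboard accepts, so it is worth checking a candidate pattern against sample hosts before installing. The sketch below does this with grep -E. It is only an approximation of what the dashboard evaluates: \d is rewritten as [0-9] because POSIX ERE has no \d, a trailing $ anchor is added (an assumption; the value used in this article has none), and the host_allowed helper and the sample hosts are invented for the example.

```shell
# ERE translation of the enforcedHostRegexp value, with a trailing $ added.
HOST_RE='^(localhost|127\.0\.0\.1|web\.linkerd-viz\.svc\.cluster\.local|web\.linkerd-viz\.svc|viz\.od\.com|\[::1\])(:[0-9]+)?$'

# Hypothetical helper: succeeds when the given host matches the allow-list.
host_allowed() {
  printf '%s\n' "$1" | grep -Eq "$HOST_RE"
}

# Try a few sample Host header values.
for h in viz.od.com localhost:8084 '[::1]:50750' evil.example.com viz.od.com.evil.example; do
  if host_allowed "$h"; then
    echo "allowed:  $h"
  else
    echo "rejected: $h"
  fi
done
```

Without the trailing anchor, a host that merely starts with an allowed name would also pass, which is the reason for adding it here.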

We can also expose the viz service through an Ingress by creating the following resources:

cat > viz-ing.yaml <<'EOF'
apiVersion: traefik.containo.us/v1alpha1
kind: Middleware
metadata:
  name: websocket
  namespace: linkerd-viz
spec:
  headers: # enable WebSocket access
    customRequestHeaders:
      Connection: keep-alive, Upgrade
      Upgrade: WebSocket
---
apiVersion: traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: linkerd-dashboard
  namespace: linkerd-viz
  annotations:
    ingress.kubernetes.io/custom-request-headers: l5d-dst-override:web.linkerd-viz.svc.cluster.local:8084
spec:
  entryPoints:
    - web
  routes:
    - match: Host(`viz.od.com`) # domain name
      kind: Rule
      services:
        - name: web
          port: 8084
    - match: Host(`viz.od.com`) && Path(`/api/tap`)
      kind: Rule
      middlewares:
        - name: websocket
          namespace: linkerd-viz
      services:
        - name: web
          port: 8084
EOF

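Open https://viz.od.com/ in a browser.

A Grafana icon appears next to each resource; clicking it jumps to the corresponding Grafana monitoring page.

Grafana deployment YAML

Official documentation: https://linkerd.io/2.13/tasks/grafana/index.html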

cat > grafana.yaml <<EOF
---
# Source: grafana/templates/serviceaccount.yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
  name: grafana
  namespace: infra
---
# Source: grafana/templates/configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: grafana
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
data:
  grafana.ini: |
    [analytics]
    check_for_updates = false
    [auth]
    disable_login_form = true
    [auth.anonymous]
    enabled = true
    org_role = Editor
    [auth.basic]
    enabled = false
    [grafana_net]
    url = https://grafana.net
    [log]
    mode = console
    [log.console]
    format = text
    level = info
    [panels]
    disable_sanitize_html = true
    [paths]
    data = /var/lib/grafana/
    logs = /var/log/grafana
    plugins = /var/lib/grafana/plugins
    provisioning = /etc/grafana/provisioning
    [server]
    domain = ''
    root_url = %(protocol)s://%(domain)s/grafana/
    serve_from_sub_path = true
  datasources.yaml: |
    apiVersion: 1
    datasources:
    - access: proxy
      editable: true
      isDefault: true
      jsonData:
        timeInterval: 5s
      name: prometheus
      orgId: 1
      type: prometheus
      url: http://prometheus.linkerd-viz.svc.cluster.local:9090
  dashboardproviders.yaml: |
    apiVersion: 1
    providers:
    - disableDeletion: false
      editable: true
      folder: ""
      name: default
      options:
        path: /var/lib/grafana/dashboards/default
      orgId: 1
      type: file
  download_dashboards.sh: |
    #!/usr/bin/env sh
    grafana_dir="/var/lib/grafana/dashboards/default"
    if [ ! -d "\$grafana_dir" ]; then
      mkdir -p "\$grafana_dir"
    fi
    download_dashboard() {
      local url="\$1"
      local file="\$2"
      if [ ! -f "\$file" ]; then
        echo "Downloading \$url..."
        curl -skf --connect-timeout 60 --max-time 60 \
          -H "Accept: application/json" \
          -H "Content-Type: application/json;charset=UTF-8" \
          "\$url" | sed '/-- .* --/! s/"datasource":.*,/"datasource": "prometheus",/g' \
          > "\$file"
      else
        echo "File \$file already exists"
      fi
    }
    # Download dashboards; a file that already exists is not downloaded again
    download_dashboard "https://grafana.com/api/dashboards/15482/revisions/2/download" \
      "\$grafana_dir/authority.json"
    download_dashboard "https://grafana.com/api/dashboards/15483/revisions/2/download" \
      "\$grafana_dir/cronjob.json"
    download_dashboard "https://grafana.com/api/dashboards/15484/revisions/2/download" \
      "\$grafana_dir/daemonset.json"
    download_dashboard "https://grafana.com/api/dashboards/15475/revisions/5/download" \
      "\$grafana_dir/deployment.json"
    download_dashboard "https://grafana.com/api/dashboards/15486/revisions/2/download" \
      "\$grafana_dir/health.json"
    download_dashboard "https://grafana.com/api/dashboards/15487/revisions/2/download" \
      "\$grafana_dir/job.json"
    download_dashboard "https://grafana.com/api/dashboards/15479/revisions/2/download" \
      "\$grafana_dir/kubernetes.json"
    download_dashboard "https://grafana.com/api/dashboards/15488/revisions/2/download" \
      "\$grafana_dir/multicluster.json"
    download_dashboard "https://grafana.com/api/dashboards/15478/revisions/2/download" \
      "\$grafana_dir/namespace.json"
    download_dashboard "https://grafana.com/api/dashboards/15477/revisions/2/download" \
      "\$grafana_dir/pod.json"
    download_dashboard "https://grafana.com/api/dashboards/15489/revisions/2/download" \
      "\$grafana_dir/prometheus.json"
    download_dashboard "https://grafana.com/api/dashboards/15490/revisions/2/download" \
      "\$grafana_dir/prometheus-benchmark.json"
    download_dashboard "https://grafana.com/api/dashboards/15491/revisions/2/download" \
      "\$grafana_dir/replicaset.json"
    download_dashboard "https://grafana.com/api/dashboards/15492/revisions/2/download" \
      "\$grafana_dir/replicationcontroller.json"
    download_dashboard "https://grafana.com/api/dashboards/15481/revisions/2/download" \
      "\$grafana_dir/route.json"
    download_dashboard "https://grafana.com/api/dashboards/15480/revisions/2/download" \
      "\$grafana_dir/service.json"
    download_dashboard "https://grafana.com/api/dashboards/15493/revisions/2/download" \
      "\$grafana_dir/statefulset.json"
    download_dashboard "https://grafana.com/api/dashboards/15474/revisions/3/download" \
      "\$grafana_dir/top-line.json"
---
# Source: grafana/templates/dashboards-json-configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: grafana-dashboards-default
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
    dashboard-provider: default
data:
  {}
---
# Source: grafana/templates/clusterrole.yaml
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
  name: grafana-clusterrole
rules: []
---
# Source: grafana/templates/clusterrolebinding.yaml
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: grafana-clusterrolebinding
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
subjects:
  - kind: ServiceAccount
    name: grafana
    namespace: infra
roleRef:
  kind: ClusterRole
  name: grafana-clusterrole
  apiGroup: rbac.authorization.k8s.io
---
# Source: grafana/templates/role.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: grafana
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
rules: []
---
# Source: grafana/templates/rolebinding.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: grafana
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: grafana
subjects:
  - kind: ServiceAccount
    name: grafana
    namespace: infra
---
# Source: grafana/templates/service.yaml
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
spec:
  type: ClusterIP
  ports:
    - name: service
      port: 80
      protocol: TCP
      targetPort: 3000
  selector:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
---
# Source: grafana/templates/deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana
  namespace: infra
  labels:
    app.kubernetes.io/name: grafana
    app.kubernetes.io/instance: grafana
    app.kubernetes.io/version: "9.4.7"
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app.kubernetes.io/name: grafana
      app.kubernetes.io/instance: grafana
  template:
    metadata:
      labels:
        app.kubernetes.io/name: grafana
        app.kubernetes.io/instance: grafana
      annotations:
        linkerd.io/inject: enabled
    spec:
      nodeName: k8s-node1
      serviceAccountName: grafana
      automountServiceAccountToken: true
      securityContext:
        runAsUser: 0
      initContainers: # an init container downloads the required dashboards
        - name: download-dashboards
          image: "docker.io/curlimages/curl:7.85.0"
          imagePullPolicy: IfNotPresent
          command: ["/bin/sh"]
          args: [ "-c", "mkdir -p /var/lib/grafana/dashboards/default && /bin/sh -x /etc/grafana/download_dashboards.sh" ]
          volumeMounts:
            - name: config
              mountPath: "/etc/grafana/download_dashboards.sh"
              subPath: download_dashboards.sh
            - name: storage
              mountPath: "/var/lib/grafana"
      enableServiceLinks: true
      containers:
        - name: grafana
          image: "docker.io/grafana/grafana:9.4.7"
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - name: config
              mountPath: "/etc/grafana/grafana.ini"
              subPath: grafana.ini
            - name: storage
              mountPath: "/var/lib/grafana"
            - name: config
              mountPath: "/etc/grafana/provisioning/datasources/datasources.yaml"
              subPath: "datasources.yaml"
            - name: config
              mountPath: "/etc/grafana/provisioning/dashboards/dashboardproviders.yaml"
              subPath: "dashboardproviders.yaml"
          ports:
            - name: grafana
              containerPort: 3000
              protocol: TCP
          env:
            - name: POD_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: GF_PATHS_DATA
              value: /var/lib/grafana/
            - name: GF_PATHS_LOGS
              value: /var/log/grafana
            - name: GF_PATHS_PLUGINS
              value: /var/lib/grafana/plugins
            - name: GF_PATHS_PROVISIONING
              value: /etc/grafana/provisioning
          livenessProbe:
            failureThreshold: 10
            httpGet:
              path: /api/health
              port: 3000
            initialDelaySeconds: 60
            timeoutSeconds: 30
          readinessProbe:
            httpGet:
              path: /api/health
              port: 3000
      volumes:
        - name: config
          configMap:
            name: grafana
        - name: dashboards-default
          configMap:
            name: grafana-dashboards-default
        - name: storage # stored on the node's local disk
          hostPath:
            path: /data/volumes/grafana
EOF

External access through Traefik

cat > k8s-yaml/grafana/ing.yaml <<'EOF'
apiVersion: traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: grafana-web
  namespace: infra
spec:
  entryPoints:
    - web
  routes:
    - match: Host(`grafana.od.com`) # domain name
      kind: Rule
      services:
        - name: grafana
          port: 80
EOF